Kubernetes is an open-source container orchestration system designed to simplify and automate the deployment, scaling, and management of containerized applications.

With Kubernetes, organizations can deploy, scale, and manage their applications in a cloud-native environment with far less manual effort.

It also supports high availability, self-healing, and a wide range of features that let users configure their application’s environment quickly and efficiently.

By using Kubernetes, businesses can improve productivity and resource utilization while maintaining security and reliability. In short, it gives organizations an efficient way to manage their applications in the cloud.

In this blog post, we’ll break down exactly what makes Kubernetes powerful, highlight its numerous features and objects, and explore the advantages and disadvantages of using the platform.

Latest version: 1.29.2

Latest release: 2024-02-14

History of Kubernetes

Kubernetes was created at Google and released as open source in 2014. It builds on ideas from Borg, Google’s internal system for managing clusters of machines.

Kubernetes is also known as K8s. The name comes from the Greek word for ‘helmsman’ or ‘pilot.’ The abbreviation ‘K8s’ replaces the eight letters between the ‘K’ and the ‘s’ with the number 8.

It builds on 15 years of experience running Google’s containerized workloads, combined with contributions from the open-source community.

The project highlights the following strengths:

- Planet scale: Kubernetes is designed on the same principles that allow Google to run billions of containers a week, so it can scale without increasing the size of your operations team.

- Never outgrow: Whether you are testing locally or running a global enterprise, Kubernetes grows with you and delivers your applications consistently, however complex your needs become.

- Run anywhere: Kubernetes is open source, so you are free to use on-premises, hybrid, or public cloud infrastructure and to move workloads to wherever it makes the most sense.

What is a Kubernetes Cluster?

A Kubernetes cluster is a group of machines, called nodes, that run containerized applications. Every cluster has at least one worker node.

The worker nodes host the Pods, which are the components of the application workload. The control plane manages the worker nodes and the Pods in the cluster.

Kubernetes supports clusters with up to 5,000 nodes. More specifically, it is designed for configurations with no more than 110 Pods per node and no more than 5,000 nodes.
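
As a rough illustration, the two limits quoted above can be turned into a simple capacity check. This is only a sketch: `ClusterPlan` and `limit_violations` are hypothetical names invented for this example, not part of any Kubernetes API.

```python
from dataclasses import dataclass

# Upper bounds quoted in the paragraph above.
MAX_NODES = 5_000
MAX_PODS_PER_NODE = 110


@dataclass
class ClusterPlan:
    """Hypothetical description of a planned cluster (not a Kubernetes type)."""
    nodes: int
    pods_per_node: int


def limit_violations(plan: ClusterPlan) -> list:
    """Return one human-readable message for each limit the plan exceeds."""
    problems = []
    if plan.nodes > MAX_NODES:
        problems.append(f"{plan.nodes} nodes exceeds the limit of {MAX_NODES}")
    if plan.pods_per_node > MAX_PODS_PER_NODE:
        problems.append(
            f"{plan.pods_per_node} pods per node exceeds the limit of {MAX_PODS_PER_NODE}"
        )
    return problems


# A plan that is fine on node count but too dense per node.
print(limit_violations(ClusterPlan(nodes=200, pods_per_node=150)))
```

An empty list means the plan stays within both limits.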

Features

Automated rollouts and rollbacks

Kubernetes gradually rolls out changes to your application or its configuration while monitoring the application’s health.

If something goes wrong, Kubernetes rolls the change back for you. You can also use deployment solutions from the Kubernetes community.
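
To make the gradual rollout more concrete, here is a minimal sketch of a Deployment manifest that uses the rolling update strategy. It is built as a Python dictionary and printed as JSON, which `kubectl` accepts alongside YAML. The name `web`, the `app: web` label, and the image are placeholders assumed for illustration.

```python
import json

# Placeholder names: "web", the "app: web" label, and the image are assumptions.
deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "replicas": 4,
        "selector": {"matchLabels": {"app": "web"}},
        "strategy": {
            "type": "RollingUpdate",
            # Add at most one extra Pod at a time and never drop below 4 ready Pods.
            "rollingUpdate": {"maxSurge": 1, "maxUnavailable": 0},
        },
        "template": {
            "metadata": {"labels": {"app": "web"}},
            "spec": {
                "containers": [{"name": "web", "image": "example.com/web:1.1"}]
            },
        },
    },
}

print(json.dumps(deployment, indent=2))
```

If the output is saved to a file, it could be applied with `kubectl apply -f`, watched with `kubectl rollout status deployment/web`, and reverted to the previous revision with `kubectl rollout undo deployment/web`.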

Storage orchestration

Kubernetes can automatically mount the storage system of your choice, whether that is local storage, a public cloud provider such as AWS or GCP, or a network storage system such as NFS, iSCSI, Ceph, or Cinder.
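
One common way to request storage is a PersistentVolumeClaim that a Pod then mounts. The sketch below shows the shape of such a claim and the matching volume entry in a Pod spec. The claim name `data`, the storage class `standard`, and the size are assumptions, and the storage classes actually available depend on the cluster.

```python
import json

# Placeholder values: the claim name, storage class, and size are assumptions.
pvc = {
    "apiVersion": "v1",
    "kind": "PersistentVolumeClaim",
    "metadata": {"name": "data"},
    "spec": {
        "accessModes": ["ReadWriteOnce"],
        "storageClassName": "standard",
        "resources": {"requests": {"storage": "10Gi"}},
    },
}

# Fragment of a Pod spec: a volume that refers to the claim by name.
pod_volume = {
    "name": "data",
    "persistentVolumeClaim": {"claimName": pvc["metadata"]["name"]},
}

print(json.dumps({"claim": pvc, "podVolume": pod_volume}, indent=2))
```

The application only refers to the claim. Which backend actually provides the storage is decided by the storage class.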

Service discovery and load balancing

There is no need to modify your application to use an unfamiliar service discovery mechanism. Kubernetes gives Pods their own IP addresses and a single DNS name for a set of Pods, and it can load-balance traffic across them.

Self-healing

Kubernetes restarts containers that fail, and it replaces and reschedules containers when nodes die. It also kills containers that don’t respond to your user-defined health check, and it doesn’t advertise containers to clients until they are ready to serve.
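
The user-defined health check is usually expressed as probes on a container. The following sketch shows a container spec fragment with a liveness probe (restart the container when it fails) and a readiness probe (send no traffic until it passes). The paths `/healthz` and `/ready`, the port, and the timings are assumptions chosen for illustration.

```python
import json

# Placeholder values: image, port, probe paths, and timings are assumptions.
container = {
    "name": "web",
    "image": "example.com/web:1.1",
    "ports": [{"containerPort": 8080}],
    # Failing this probe repeatedly causes the container to be restarted.
    "livenessProbe": {
        "httpGet": {"path": "/healthz", "port": 8080},
        "initialDelaySeconds": 5,
        "periodSeconds": 10,
        "failureThreshold": 3,
    },
    # Until this probe passes, the Pod receives no traffic from Services.
    "readinessProbe": {
        "httpGet": {"path": "/ready", "port": 8080},
        "periodSeconds": 5,
    },
}

print(json.dumps(container, indent=2))
```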

Automatic bin packing

Kubernetes automatically places containers based on their resource requirements and other constraints. This avoids wasting resources while still keeping everything available when needed.
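
The idea of placing containers by resource requirements can be illustrated with a toy best-fit rule: pick the node with the least free CPU that still satisfies the request. This is not how the real Kubernetes scheduler works internally. It is a deliberately simplified sketch, and `pick_node` and the node names are hypothetical.

```python
from typing import Dict, Optional


def pick_node(free_cpu_millis: Dict[str, int], request_millis: int) -> Optional[str]:
    """Toy best-fit placement: the tightest node that still has enough free CPU."""
    candidates = {
        node: free
        for node, free in free_cpu_millis.items()
        if free >= request_millis
    }
    if not candidates:
        return None  # nothing fits; a real Pod would stay pending
    return min(candidates, key=candidates.get)


# Free CPU per node in millicores (hypothetical cluster state).
free = {"node-a": 500, "node-b": 2000, "node-c": 900}
print(pick_node(free, 800))
```

With these numbers the request for 800 millicores lands on `node-c`, leaving the larger gap on `node-b` free for bigger workloads.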

Batch execution

In addition to long-running services, Kubernetes can manage batch and CI workloads and replace containers that fail, so you don’t have to handle failed runs by hand.
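
Batch workloads are typically described with a Job object. The sketch below shows a Job that must complete three times and retries failed Pods up to four times. The name `report` and the image are placeholders assumed for this example.

```python
import json

# Placeholder values: the Job name, image, and counts are assumptions.
job = {
    "apiVersion": "batch/v1",
    "kind": "Job",
    "metadata": {"name": "report"},
    "spec": {
        "completions": 3,   # run to successful completion three times
        "parallelism": 1,   # one Pod at a time
        "backoffLimit": 4,  # give up after four retries
        "template": {
            "spec": {
                # Jobs require a restart policy of Never or OnFailure.
                "restartPolicy": "Never",
                "containers": [
                    {"name": "report", "image": "example.com/report:1.0"}
                ],
            }
        },
    },
}

print(json.dumps(job, indent=2))
```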

Horizontal scaling

You can scale your application up or down with a single command, through a UI, or automatically based on CPU usage.
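
Automated scaling based on CPU usage follows a simple proportional rule documented for the Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to the target, rounded up. The sketch below implements only that core formula. The real controller also applies a tolerance and minimum and maximum replica bounds, which are left out here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float) -> int:
    """Core autoscaling formula: ceil(current_replicas * current / target)."""
    return math.ceil(current_replicas * current_metric / target_metric)


# 4 replicas at 90% average CPU with a 60% target.
print(desired_replicas(4, 90, 60))  # prints 6
```

When the observed metric is below the target, the same formula scales down: 4 replicas at 30% against a 60% target gives 2.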

Designed for extensibility

You can add features to your cluster without changing the upstream source code.

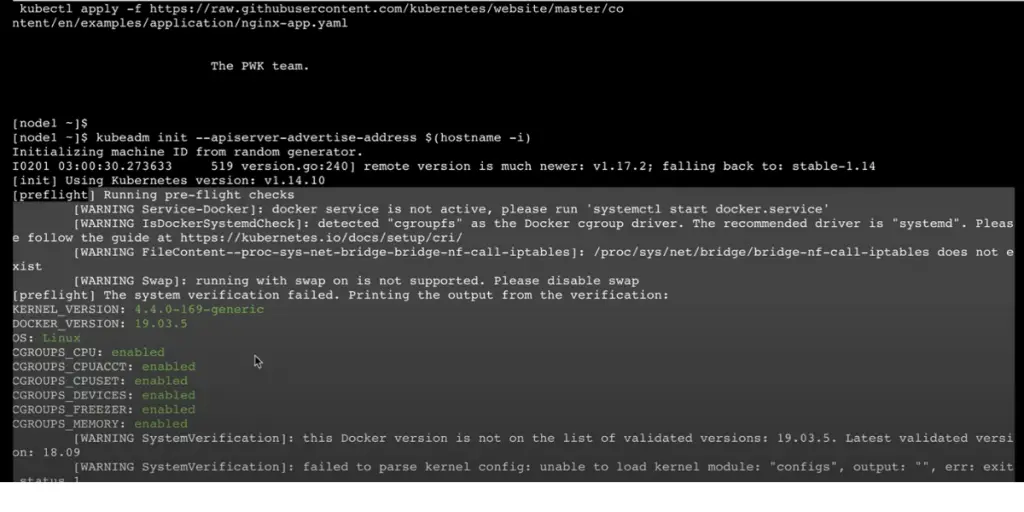

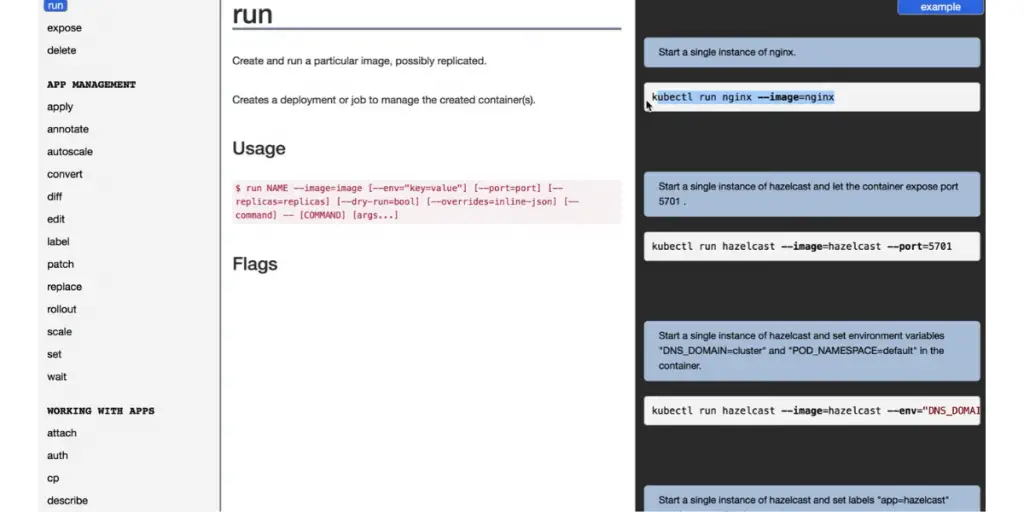

Some screenshots of features

Main Objects

Pod

The Pod is the basic building block of Kubernetes: one or more containers that share a networking layer and filesystems. Pods are short-lived by design. They are created when needed and disappear just as quickly once they are no longer required.

Deployment

Deployments provide an easy way to launch collections of Pods defined by a template. You can set the number of replicas directly or use other Kubernetes resources, such as the Horizontal Pod Autoscaler, to adjust the replica count dynamically based on performance metrics like CPU utilization.
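
As an illustration of pairing a Deployment with an autoscaler, here is a minimal sketch of a HorizontalPodAutoscaler manifest that targets average CPU utilization. The Deployment name `web`, the replica bounds, and the 60% target are assumptions made for this example.

```python
import json

# Placeholder values: the target Deployment "web", bounds, and CPU target are assumptions.
hpa = {
    "apiVersion": "autoscaling/v2",
    "kind": "HorizontalPodAutoscaler",
    "metadata": {"name": "web"},
    "spec": {
        # The workload whose replica count the autoscaler adjusts.
        "scaleTargetRef": {"apiVersion": "apps/v1", "kind": "Deployment", "name": "web"},
        "minReplicas": 2,
        "maxReplicas": 10,
        "metrics": [
            {
                "type": "Resource",
                "resource": {
                    "name": "cpu",
                    "target": {"type": "Utilization", "averageUtilization": 60},
                },
            }
        ],
    },
}

print(json.dumps(hpa, indent=2))
```

CPU utilization is measured relative to the CPU requests of the containers, so the target Pods need CPU requests set for this kind of metric to work.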

Service

Services let you keep directing traffic to your application even as it changes, scales, or encounters errors.

Labels attached to Pods tell a Service where to send traffic: the Service routes requests to the Pods whose labels match its selector, no matter what is happening behind the scenes.
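
The label matching behind a Service can be sketched in a few lines: a Pod is a target when every key and value in the Service selector also appears in the Pod’s labels. The Pod names and labels below are made up for illustration, and `matches` is a hypothetical helper rather than a Kubernetes function.

```python
def matches(selector: dict, labels: dict) -> bool:
    """True when every selector key/value pair is present in the Pod's labels."""
    return all(labels.get(key) == value for key, value in selector.items())


# Hypothetical Pods and their labels.
pods = {
    "web-1": {"app": "web", "tier": "frontend"},
    "web-2": {"app": "web", "tier": "frontend"},
    "db-1": {"app": "db"},
}

selector = {"app": "web"}
targets = sorted(name for name, labels in pods.items() if matches(selector, labels))
print(targets)  # prints ['web-1', 'web-2']
```

Because the match is on labels rather than Pod names or IP addresses, replacement Pods with the same labels pick up traffic automatically.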

Ingress

An Ingress object makes an application running inside the cluster available to clients outside it, typically by routing external HTTP and HTTPS traffic to Services. This gives you a straightforward way to expose applications and services to the web.
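
A minimal Ingress manifest might look like the sketch below, which routes all requests for one host to a Service. The host `app.example.com`, the Service name `web`, and port 80 are placeholders assumed for this example. An Ingress controller also has to be running in the cluster for the object to have any effect.

```python
import json

# Placeholder values: host, Service name, and port are assumptions.
ingress = {
    "apiVersion": "networking.k8s.io/v1",
    "kind": "Ingress",
    "metadata": {"name": "web"},
    "spec": {
        "rules": [
            {
                "host": "app.example.com",
                "http": {
                    "paths": [
                        {
                            "path": "/",
                            "pathType": "Prefix",
                            # Requests matching this path go to the "web" Service.
                            "backend": {
                                "service": {"name": "web", "port": {"number": 80}}
                            },
                        }
                    ]
                },
            }
        ]
    },
}

print(json.dumps(ingress, indent=2))
```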

Advantages

- It makes application management a breeze, allowing you to automate tedious operations with just a few built-in commands.

- It provides the components your workloads need to run smoothly, from compute and networking to storage.

- It constantly checks to see if your services are running properly. If they aren’t, Kubernetes will restart the containers or mark them as unavailable.

Disadvantages

- Working with it as a DevOps engineer requires knowledge of related cloud-native topics, such as distributed applications, logging, and cloud computing.

- Initial configuration is difficult, and the system is hard to learn.

- Common problem areas are security, networking, deployment, scaling, and vendor support.

- Upgrading a cluster takes considerable effort.

- Designing applications for this system is difficult.

- Document sharing is not easy.

- Process creation and Pod setup can be expensive.

- There is no deployment support on mobile.

- It is difficult for new users.

- Errors are difficult to resolve.

- The learning curve is long.

- The project analysis feature needs updating.

- Connections sometimes fail.

Kubernetes is supported by a partner ecosystem that includes Kubernetes Certified Service Providers (KCSP), conformance partners, and Kubernetes Training Partners (KTP).

Training and Certification

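The Linux Foundation is one of the training partners for Kubernetes. Through it, you can earn certifications such as Certified Kubernetes Administrator (CKA), Kubernetes and Cloud Native Associate (KCNA), Certified Kubernetes Application Developer (CKAD), and Certified Kubernetes Security Specialist (CKS).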

FAQs

What is the difference between Kubernetes and Docker?

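Docker may be the vehicle, but Kubernetes is the traffic controller: Docker builds and runs containers, while Kubernetes orchestrates them across a cluster. Through its Container Runtime Interface (CRI), Kubernetes supports multiple runtimes such as containerd and CRI-O. Built-in support for Docker Engine (dockershim) was removed in v1.24, although Docker Engine can still be used through the cri-dockerd adapter. This helps applications run efficiently in many different environments.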

Who are the providers of Kubernetes service?

Managed Kubernetes services include Amazon Elastic Kubernetes Service (EKS) and Azure Kubernetes Service (AKS). EKS, for example, is a managed service that makes it easier to run Kubernetes on AWS or on-premises. It operates the control plane for you and can manage worker nodes as well.

Can Kubernetes work without the cloud?

Yes. Kubernetes works well with cloud computing, but it can also run on on-premises infrastructure.

Conclusion

With easy deployment and scaling capabilities, Kubernetes can be used for faster and easier distributed application development, testing, and deployment.

In addition, it offers powerful orchestration tools that make it easy to use resources efficiently across the nodes of a cluster.

While it has some disadvantages, such as security challenges and a steep learning curve, the advantages still outweigh them when you view Kubernetes as part of a modern DevOps toolkit.

With all this in mind, it is evident that this platform will continue to play an integral role in application delivery and management for the foreseeable future.

Reference