Many of you are searching for machine learning software that can streamline developing, deploying, and managing ML models in production.

MLflow is an open-source platform for managing the entire machine learning (ML) lifecycle. It provides tools for tracking, logging, and comparing experiments with various parameters and settings, making it easy to reproduce the results and collaborate with others.

It also enables users to package ML models into reproducible projects that can be deployed into any environment, allowing developers to roll out models across various platforms easily.

Its intuitive interface, simple setup, and robust features make it an excellent way to manage complex machine-learning projects.

This blog is designed to provide complete details of MLflow Machine Learning Software, its features, components, and alternatives.

New version: MlFlow 2.11.1

Release date: March 6, 2024

Details

MLflow is a platform that makes it easier to develop machine learning models. This includes experimentation, reproducibility, deployment, and a central model registry.

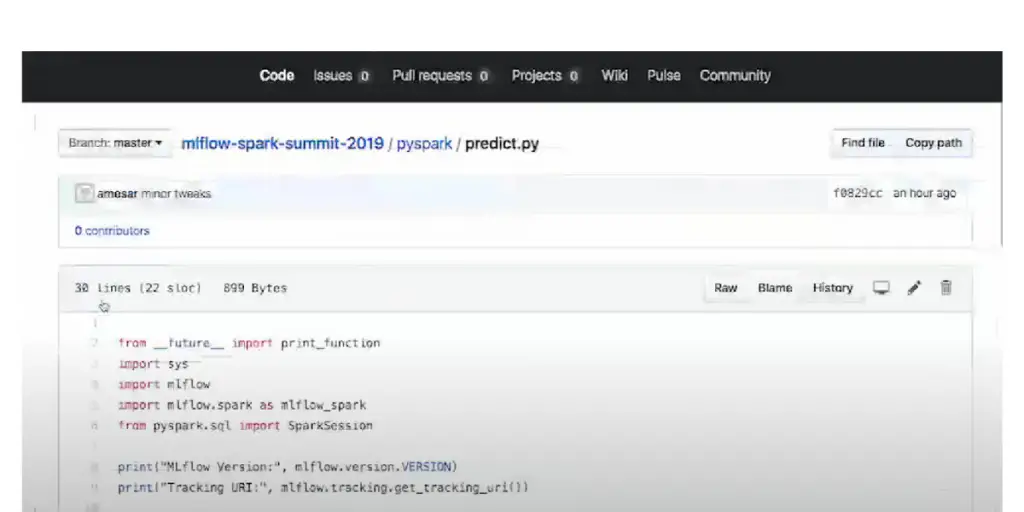

It has a set of APIs that can be used with any machine learning application or library, like TensorFlow, PyTorch, or XGBoost. As a result, you can use MLflow wherever you run ML code, whether in notebooks, standalone applications, or the cloud.

Features

- Work with any ML library and language and existing code.

- Designed for one user to large organizations

- MLflow effortlessly monitors experiments, compares parameters, and analyzes results.

- Scales to big data with” Apache Spark.”

- MLflow streamlines the deployment of machine learning models – instantly connecting cutting-edge libraries to any model serving and inference platform.

- MLflow enables data scientists to package ML code into reusable and reproducible modules, allowing them to share their work with colleagues or seamlessly deploy it in production.

Components of MLflow

It currently offers four components: It includes features like tracking experiments, packaging code into reproducible runs, and sharing and deploying models.

MLflow Tracking

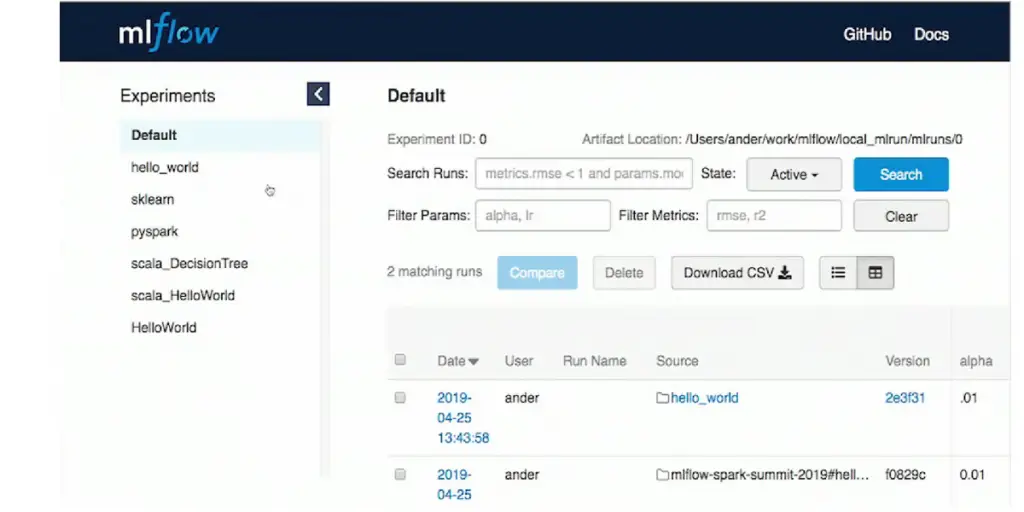

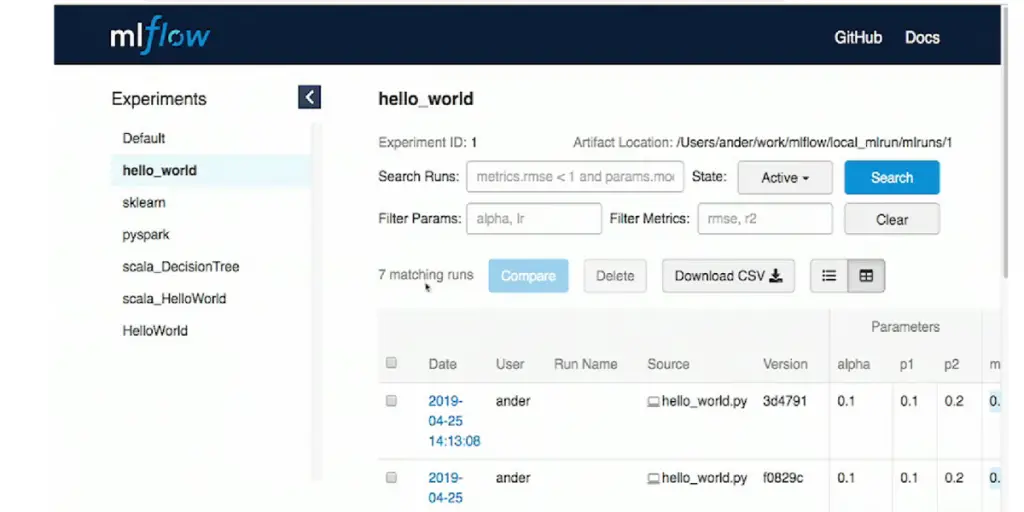

Tracking is an API and UI that lets you log parameters, code versions, metrics, and output files when running your machine learning code. You can log and query experiments using Python, REST, R API, and Java API APIs.

This system uses the concept of runs to keep track of executions of data science code. You can record the runs using MLflow Python, R, Java, and REST APIs from anywhere. For each run, it records the following information:

- Code version

- Start and end time

- Source

- Parameters

- Metrics

- Artifacts

To keep track of your progress remotely, set the MLFLOW_TRACKING_URI environment variable to connect it with a tracking server or call mlflow.set_tracking_uri() for an effortless experience. Types of remote Tracking URIs are

- Local file path where data is filed directly stored locally((specified as file:/my/local/dir))

- Database encoded as <dialect>+<driver>://<username>:<password>@<host>:<port>/<database>

- HTTP server (specified as https://my-server:5000)

- Databricks workspace(specified as databricks or as databricks://<profileName>

It provides various storage solutions for your runs and artifacts, from local file systems to remote tracking servers. Its backend store captures the run information, while artifact stores keep track of output files associated with each experiment.

This comprehensive system lets you quickly and easily find what you need quickly and easily. Below are some configurations available for file store and local artifact repository.

- On localhost

- On localhost with SQLite

- On localhost with tracking server

- On localhost with remote tracking server backing and artifact stores

- With the MLflow tracking server, accessing artifact storage is a breeze.

- The tracking server used exclusive access as a proxied access host for artifact storage access.

MLflow Project

This project helps you to organize and describe your code so that other data scientists or automated tools can run it. Each project is a directory of files, or a Git repository, containing your code.

It can automatically run some projects based on certain conventions (for example, a conda. yaml file is treated as a Conda environment).

Still, you can also add an MLproject file to describe your project in more detail. This file is written in YAML format. Each project specifies the following properties.

- Project Name

- Entry point

- Project Environment

- Project directories

- MLproject file

There are two ways to run MLflow projects: the MLflow runs the command-line tool or the MLflow. Projects.run() Python API. Both tools need the following information:

- Project URI

- Project version

- Entry point

- Parameters

- Deployment mode

- Environment

MLflow Models

This convenient and powerful format provides a standardized way to package, deploy, and share trained models on Apache Spark for real-time serving or batch processing. In addition, you can use this flexible framework that allows you to use different downstream tools easily.

- Storage format

- Model signature and input example

- Model API

- Built-in model flavors

- Community model flavors

- Model evaluation

- Model customization

- Built-in deployment tool

- Deployment to custom targets

MLflow Model Registry

The Model Registry is the go-to place for managing your machine learning models(APIs and UIs).

It enables you to store and track versions, analyze lineage across experiments, the transition between stages in product life cycles, and provide annotations. A few concepts of the model registry are as follows.

- Model

- Registered model

- Model version

- Model stage

- Annotations and descriptions

To store a model in the Model Registry, log it using one of its compatible flavors. Then you’re ready to go. From there, make all the changes and updates your heart desires – add new models or delete old ones with an intuitive UI or quick API commands.

Installation

- Installation can be done from PyPl via pip install.

- It requires Conda to be done on the Path for the project’s future.

- Nightly snapshots are also available.

- For the most efficient MLflow setup, grab a light version of it from PyPI – just”pip install MLflow-skinn”.

- Then you can add extra dependencies along the way depending on your desired outcome. For example, if you need an MLmodel supported by mlflow.pyfunc., simply do”pip install mlfow-skinny pandas nump” and achieve it.

Other information

| Founded in | 2018 in the United States |

| Users and organizations | Mid-size businesses, small businesses, enterprises, freelance, government, and non-profit organizations. |

| Language support | English |

| Integration | Amazon SageMaker, Azur Data Science, Google Cloud Platform, Tensorflow, Microsoft 365, navio, Docker, etc |

| Training | Amazon SageMaker, Azur Data Science, Google Cloud Platform, Tensorflow, Microsoft 365, navio, Docker, etc |

Pros and cons of MLflow

Pros

- Easy to track the performance of the training

- Easy-to-reproduce model versions

- Easy to modify lifecycle stages with ML

- Easy to deploy models to production

- Easy to Serve packaging models in a standard format

- Easy to Integrate with other tools

Cons

- Difficult to share experiments

- No scope for a multi-user environment

- Doesn’t offer Role-based access options

- Lack of advanced features

- Working models are not automatic

Alternatives

- Tensorflow

- Airflow

- Kubeflow

- DVC

- Seldon

- Pytorch

- Pandas

- Scikit learn

Where does Mlflow store data?

MLflow runs are recorded so you can keep track of them. They can be saved in files, a database, or sent to a tracking server. Usually, the MLflow Python API saves the data in the ml runs directory on your computer.

What are the stages of MLflow?

MLflow Model Registry has four stages: None, Staging, Production, and Archived. Each of these stages has its special meaning.

Conclusion

MLflow is a good tool for managing and deploying machine learning projects. It helps streamline the entire ML lifecycle with a simple setup and intuitive interface, enabling teams to reproduce results and collaborate easily.

Whether you are just getting started or have been working in this field for years, It provides a reliable platform that can be used to deploy machine learning models into production quickly.

We hope this blog post is efficiently provided you with all the details about MLflow ML software.